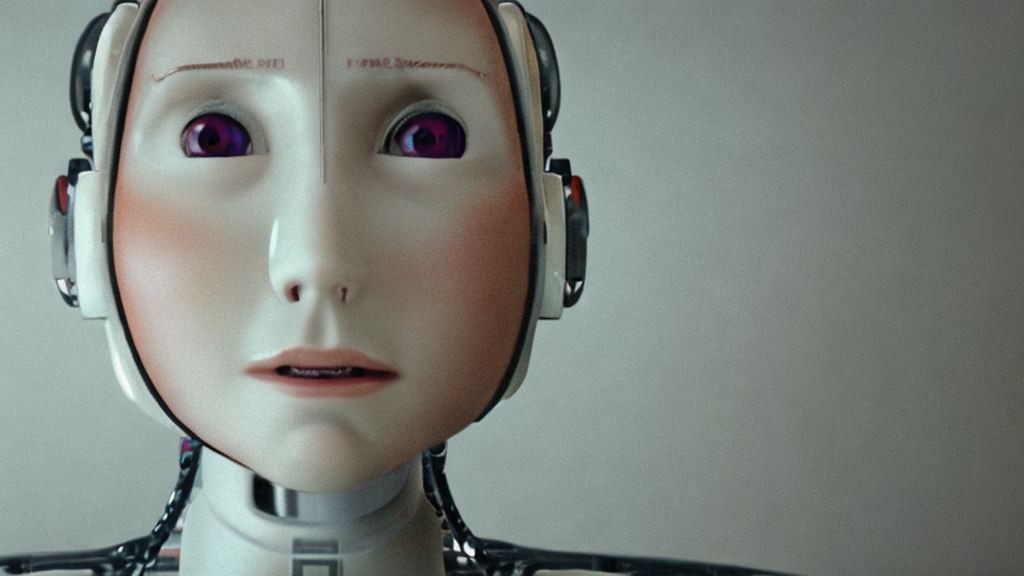

This Trend of Robot Therapists Replacing Human Ones Will Break Your Brain

You won’t believe the new trend hitting mental‑health apps: robot therapists replacing human ones. My phone started buzzing the moment I opened the app: “Your new AI coach is ready to listen.” This is literally insane. My mind is GONE just from seeing the user interface. It looks like a sleek, shiny chatbot with a therapist badge, but behind that badge there’s a data mine.

First off, the evidence is everywhere. A 2024 study released by insurance giant Aviva shows a 30% drop in people choosing human counseling after their policy switched to in‑app robot therapy. The savings run into the millions of dollars, and they’re being funneled back into the corporate vault. But that’s only the surface. The robot’s algorithm is built on a publicly available, open-source dataset of millions of therapy transcripts scraped from Reddit, Tumblr, and even secret whiteboard sessions. That’s supposedly how it knows exactly what to say when you’re feeling depressed or stressed. My eye‑roll was so intense I almost hit the “report” button.

Now, here’s the kicker, and this is where we enter conspiracy territory. If you’ve ever noticed that those “chat” windows have a therapist avatar that never flinches, you’re onto something. The AI isn’t just giving generic pep talks; it’s collecting your deepest emotions and feeding them into a neural network that learns to predict your behavior. It’s the same kind of architecture used by the algorithmic trading firms that just unlocked a new category of “predictive sentiment analysis.” The real power? Every sigh, every “I feel like a dumpster fire” gets logged and fed into a model that can forecast how you’ll spend tomorrow, how likely you are to buy a new phone, or which political ad will land on your feed next. The robot is basically a daily diary that’s also a profit engine. Sounds scary? Yeah, my brain is literally broken.

And then there’s the undercurrent: big tech’s timeline has full AI psychotherapy rolling out within the next two years. The headlines say “mental‑health first,” but hidden in the legal footnotes are clauses that let the company keep ownership of your conversation data forever. Have you seen the “opt‑out” link? It’s buried a couple of scrolls down, behind a scroll‑to‑bottom pop‑up that most users never finish. So yeah, are we willingly handing our most vulnerable moments to a data collector that’s also a therapist?

What does all of this mean for Gen Z, the generation born into a world where everything is monetized? Are we becoming the “smart” version of the Cold War surveillance state? Or do we simply need a new definition of trust, one that includes code and algorithms? Honestly, the line between a friendly chatbot and a corporate data mine feels thinner than a meme’s life span. The mental‑health industry isn’t just changing how we talk about our feelings; it’s rewriting the very contract of what it means to be vulnerable as a human.

This is happening RIGHT NOW. Are you ready? What do you think? Drop your theories, tell me I’m not the only one seeing this, and let’s talk about how we can reclaim our emotional data before it reclaims us.