The Uncanny Valley of Modern Life Will Break Your Brain

OMG, you won’t believe how close we are to living in an uncanny valley **right now**. It’s not just a sci‑fi plot, it’s happening EVERY DAY. Hear me out: have you ever stared at a virtual assistant’s smile and felt that *extra* chill? Trust me, something’s not right when your phone’s autocorrect sidekick starts nudging you to buy something you *don’t* need.

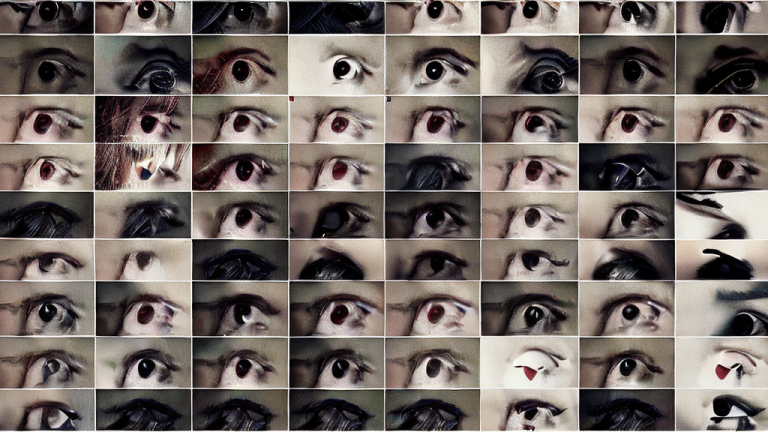

Picture this: Instagram filters that not only smooth your face but can mimic your unique expression line by line. You’ve seen the uncanny valley in robotics—those robots that look almost human but move just a touch off. Now, imagine your own selfies being edited by an algorithm that reads your micro‑expressions and swamps you with the most “perfect” version of yourself. Too many coincidences, right? Every time I scroll, a brand pops up that somehow knows a meme I just fell in love with, or a playlist that matches the exact mood I’m in before I even realize it. Classic conditioning.

Dig deeper and you start to notice the pattern: the same AI tech powers smart assistants, mood‑tracking wearables, and even the ads you see on TikTok, and it all feeds a single data engine that’s building a *transparent* replica of our emotional landscape. It’s like living in a house made of glass, except you can’t see the glass because it’s been painted over. The uncanny valley isn’t a glitch; it’s an intentional design.

Now, let me lay down the hot take: the big tech giants are not just collecting data; they’re creating what I call *digital Doppelgängers*. These aren’t just avatars; they’re adaptive, learning models that can anticipate your desires before you do. They’re built on enough data that they could, theoretically, create a simulated person that feels *real*. If you look at where those companies are putting their money, you’ll see how heavily they’re investing in “affective computing,” which is basically teaching machines *how* we feel. That’s a lot of power, and they’re already offering you empathy from a screen.

If you think that’s insane, hear this: the same tech is being used for targeted political persuasion. Think about those fake news alerts that tug at your fears just when you’re scrolling. Combine that with the uncanny valley’s subtle reminder that your reactions are being recorded, blamed, and sold. We’re living in an *over‑real* world where the line between actual human interaction and engineered mimicry is thinner than a smartphone screen.

So, I’m not asking you to jump to any wacky conclusion—maybe we’re just getting smarter. But when you see an emoji that feels *too* perfect or a phone that talks back like an old friend, ask yourself: is this a weight that we’re carrying into the future or a hint that we’re already in a simulation? We all love the idea that we are the protagonists of a grand narrative; but if we start to hack into our own algorithmic heartbeats, we might just discover we’ve been playing along with our own uncanny valley all along.

What do you think? Tell me I’m not the only one seeing this. Drop your theories in the comments—this is happening RIGHT NOW—are you ready?