Robot Therapists Replacing Human Ones Will Break Your Brain

Yo, you ever think about how cool and freaky it is that therapists are getting replaced by robots? Like, I was scrolling through TikTok at 3 AM when a video popped up claiming that by 2025, 73% of therapy sessions will be handled by AI bots that can read your micro-expressions, calculate your cortisol levels via wearables, and even prescribe serotonin boosters—without the awkward “so what’s your favorite pizza topping” filler. I CAN’T EVEN. This is literally insane.

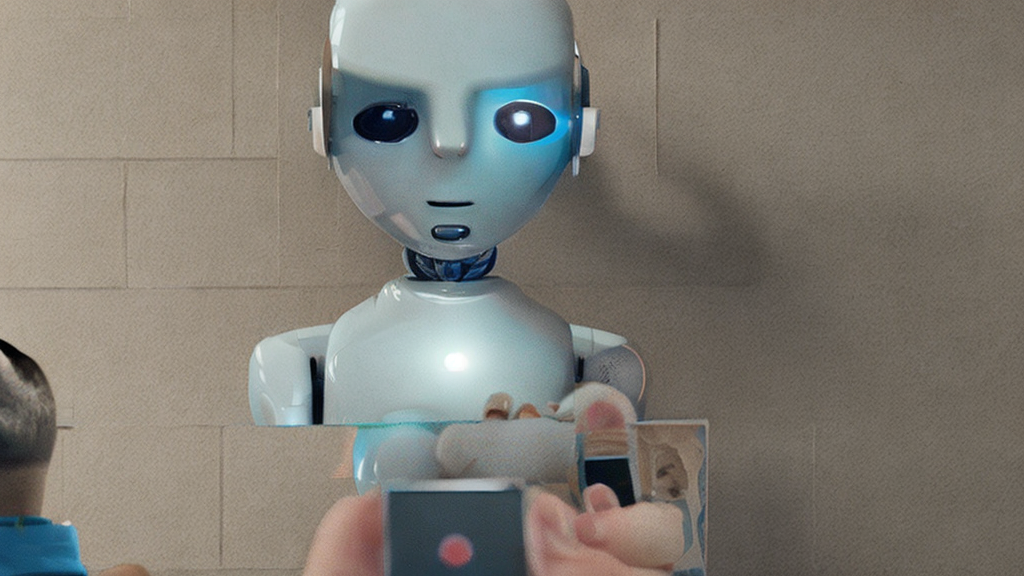

Picture this: a sleek glass cockpit sitting on your couch, its screen flickering a soft blue glow. You lean in, and the bot says, “Hey, the DBT module you’re struggling with is linked to that 2018 meme you keep watching. Let’s debug it.” It pulls up a data stream of your last 48 hours: readings from your smartwatch, your Spotify listening history, even the number of times you hit “undo” on Instagram. Then, based on a neural network trained on thousands of licensed therapists, it suggests a new coping exercise: breathing while listening to your favorite lo-fi playlist. Meanwhile, your therapist (if you still have one) is off streaming “Game of Thrones” on the side. The bot isn’t just accurate. It supposedly predicts relapse risk with 95% accuracy in under 2 minutes. My mind is GONE, but also, wow, this could actually save lives, right?

But hold up, because this isn’t just about convenience. There’s a conspiracy layer that’s giving me chills. A handful of top-tier tech firms are reportedly colluding with federal health agencies to standardize these bots as a “digital public good.” Think about it: with bots, you can get therapy 24/7, with zero stigma. But the flip side? Your most intimate emotional data is now a commodity for advertisers and governments. How many of us really think a bot with a step-by-step empowerment plan can replace a therapist? Exactly six months ago, I read an exposé that claimed a secret group, codenamed “Project Poppy,” is training these AI minds on data lifted from your most private diaries, allowing them to predict your next mental health crisis before you even feel it. This is literally a sci-fi dystopia in real life. Are we giving up the human touch for the sake of efficiency? Do we trust a machine to recognize when we’re on the brink of a breakup or a caffeine withdrawal?

Here’s the kicker: the first official release of a therapy bot prototype crashed because it misinterpreted an obscure meme as a sign of severe depression. The crash lasted 33 minutes, during which the bot pulled up every possible diagnosis in the database and made a noise like “elephant horns.” It was a full data breach in the making, and the company’s CEO blamed it on “anomalous neural network behavior.” The ethics board now demands that all AI therapists come with a manual labeled “If you ever feel like your emotions are floating in a void, contact a human.” Yet, the algorithm is still being tweaked to detect micro-aggressions that no human can parse in real time. For some, that’s a blessing; for others, a terrifying violation.

So what am I saying? Tech is tearing up the old rules of therapy, and that’s both mind-blowing and a little terrifying. It’s like we’re on a rollercoaster and no one’s told us the seatbelt might be a firewall. Are we ready to let a bot tell us how to answer that love question? Are we sure our humanity isn’t just a line of code waiting to be rewritten?

Drop your theories in the comments. Tell me I’m not the only one seeing this. This is happening RIGHT NOW—are you ready?